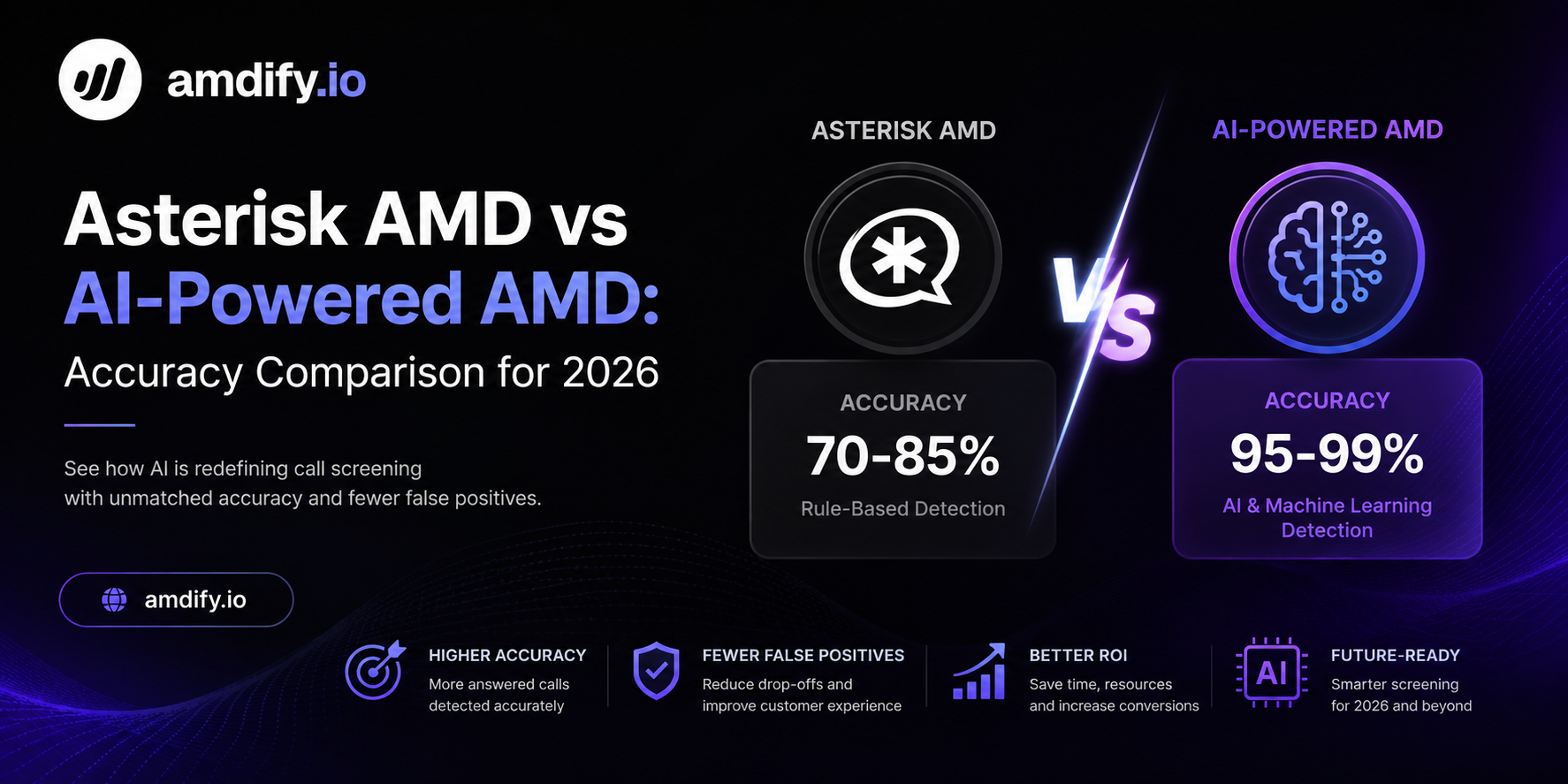

Asterisk AMD vs AI-Powered AMD: Accuracy Comparison for 2026

If you run a VICIdial or Asterisk-based call center, answering machine detection is one of the most consequential technical decisions you make. Get it wrong and you drop live calls. Get it right and every agent handles more productive conversations per shift.

For years, Asterisk's built-in AMD was the only practical option. In 2026, that is no longer true. AI-powered AMD has matured to the point where the accuracy gap is large enough to directly affect campaign economics.

This comparison covers how each approach works, where the accuracy differences come from, and what the numbers look like in a real dialing environment.

How Asterisk AMD Works

Asterisk's AMD application uses a rule-based heuristic approach. When a call connects, it listens to the first several seconds of audio and analyzes:

- Initial silence duration — long silence at the start suggests a machine is processing before playing a greeting

- Word burst length — voicemail greetings typically contain longer uninterrupted speech segments than human answers

- Pause after the greeting: a live person says a word or two and then waits for a reply, while a recording keeps talking

- Word count: a recorded greeting usually contains more distinct words than a live answer. The stock AMD application does not detect the voicemail beep itself.

These heuristics are configured through parameters in amd.conf:

[general]

initial_silence=2500

greeting=1500

after_greeting_silence=800

total_analysis_time=5000

min_word_length=100

between_words_silence=50

maximum_number_of_words=3

silence_threshold=256

The challenge is that these parameters were calibrated for an older telephony environment, one that no longer matches what most operations dial into.
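To make the role of each parameter concrete, here is a minimal Python sketch of a threshold classifier in the same spirit. It is a simplified illustration and not the actual Asterisk implementation: it assumes the audio has already been split into voice and silence segments (the job `silence_threshold` does in the real application), and the `AmdParams` and `classify` names are made up for this example.

```python
from dataclasses import dataclass


@dataclass
class AmdParams:
    # Values copied from the amd.conf example above (milliseconds, except the word count).
    initial_silence: int = 2500
    greeting: int = 1500
    after_greeting_silence: int = 800
    total_analysis_time: int = 5000
    min_word_length: int = 100
    between_words_silence: int = 50
    maximum_number_of_words: int = 3


def classify(segments, p=None):
    """Classify a call from ("voice" | "silence", duration_ms) segments in call order."""
    p = p or AmdParams()
    elapsed = 0      # audio analyzed so far
    voice_ms = 0     # total voiced audio so far
    word_ms = 0      # length of the voiced run currently in progress
    words = 0        # voiced runs that reached min_word_length
    counted = False  # whether the current run was already counted as a word

    for kind, ms in segments:
        ms = min(ms, p.total_analysis_time - elapsed)  # never look past the analysis window
        if ms <= 0:
            break
        elapsed += ms
        if kind == "voice":
            voice_ms += ms
            word_ms += ms
            if not counted and word_ms >= p.min_word_length:
                counted = True
                words += 1
            if words > p.maximum_number_of_words or voice_ms >= p.greeting:
                return "MACHINE"  # too many words or too much speech for a live answer
        else:
            if ms >= p.between_words_silence:
                word_ms, counted = 0, False  # the pause is long enough to end the word
            if words == 0 and ms >= p.initial_silence:
                return "MACHINE"  # nobody spoke within the initial silence limit
            if words > 0 and ms >= p.after_greeting_silence:
                return "HUMAN"  # short greeting followed by a waiting pause
    return "NOTSURE"


print(classify([("silence", 200), ("voice", 400), ("silence", 1000)]))  # HUMAN
print(classify([("silence", 300), ("voice", 2000)]))                    # MACHINE
```

Every decision in the sketch is a fixed millisecond comparison, which is why a change in how greetings sound can shift results without any change in configuration.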

How AI-Powered AMD Works

AI-powered detection replaces static rules with a neural model trained on millions of classified call recordings. Instead of checking predefined thresholds, the model analyzes the full acoustic signature of the greeting and outputs a classification — along with a confidence score.

The model evaluates:

- Acoustic patterns in speech onset and rhythm

- Natural language characteristics of human vs. machine responses

- Background noise signatures typical of carrier processing

- Temporal patterns that distinguish rushed human answers from recorded greetings

- Short responses that rule-based systems miss (for example, a "Hello?" lasting under 300 ms)

Because the model was trained on current data, it handles modern carrier audio processing, iOS 26 voicemail behavior, and international greeting formats without manual tuning.
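Because the model returns a confidence score alongside the label, the dialer can decide how sure it wants to be before dropping a call. The sketch below shows one way that decision might look. The label strings, the 0.90 threshold, and the `route_call` name are assumptions for illustration, not a documented API.

```python
def route_call(label, confidence, machine_threshold=0.90):
    """Return "AGENT" or "DROP" for one classification result.

    Only a MACHINE label at or above the threshold drops the call. Everything
    else, including low-confidence MACHINE results, goes to an agent.
    """
    if label == "MACHINE" and confidence >= machine_threshold:
        return "DROP"
    return "AGENT"


print(route_call("MACHINE", 0.97))  # DROP
print(route_call("MACHINE", 0.60))  # AGENT
print(route_call("HUMAN", 0.99))    # AGENT
```

Raising the threshold trades a few more voicemails reaching agents for fewer dropped live calls, which is usually the cheaper mistake.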

Accuracy Comparison

Based on real-world testing across US outbound campaigns in Q1 2026:

| Metric | Asterisk AMD | AI-Powered AMD (amdify.io) |
|---|---|---|
| False Positive Rate | 15–25% | 1–3% |
| False Negative Rate | 8–12% | 2–4% |
| Overall Accuracy | 72–80% | 95–99% |
| Detection Latency | 3–5 seconds | 200–400 ms |
| iOS 26 Voicemail Accuracy | ~55% | ~97% |
| Short Human Response Detection | Poor | Excellent |
| Carrier Audio Processing Handling | Poor | Excellent |
| Tuning Required | Ongoing manual | None |

The false positive rate, meaning live humans misclassified as machines, is the number that matters most for revenue. A 20% false positive rate means 1 in 5 connected live humans is dropped before agent delivery. Those calls count as dials in your cost structure but produce zero conversations.
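If you want to measure these rates on your own traffic, they fall out of four counts from a hand-labeled sample of calls. The following is a small sketch of that arithmetic. The function name and the sample counts are illustrative and are not taken from the test data behind the table.

```python
def amd_metrics(humans_to_agent, humans_dropped, machines_dropped, machines_to_agent):
    """Compute AMD error rates from a labeled sample of connected calls."""
    humans = humans_to_agent + humans_dropped
    machines = machines_dropped + machines_to_agent
    return {
        # Live humans wrongly treated as machines.
        "false_positive_rate": humans_dropped / humans,
        # Machines wrongly delivered to agents.
        "false_negative_rate": machines_to_agent / machines,
        # Share of all calls classified correctly.
        "accuracy": (humans_to_agent + machines_dropped) / (humans + machines),
    }


# Illustrative sample: 600 live answers and 4,000 machine answers.
metrics = amd_metrics(humans_to_agent=480, humans_dropped=120,
                      machines_dropped=3600, machines_to_agent=400)
print(metrics["false_positive_rate"])  # 0.2
print(metrics["false_negative_rate"])  # 0.1
```

Overall accuracy depends on the mix of humans and machines in the sample, so compare false positive and false negative rates directly when evaluating two systems on different traffic.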

Where Asterisk AMD Breaks Down in 2026

iOS 26 Voicemail Behavior

Apple's iOS 26 introduced significant changes to how voicemail greetings play back. The new system plays a shorter, synthesized greeting with different acoustic characteristics than previous versions. Asterisk AMD's thresholds were not tuned for this audio, so it misclassifies a substantial percentage of iOS 26 voicemails as live humans.

This drives false negatives — agents get connected to voicemail greetings and have to manually detect and drop the call, wasting handle time.

Carrier-Side Audio Processing

Modern VoIP carriers apply aggressive audio processing (noise reduction, level normalization, echo cancellation) that changes the acoustic properties of both human and machine audio. The threshold parameters in amd.conf were tuned against audio that had not passed through this processing, and they produce incorrect results when the signal characteristics shift.

Short Human Responses

A "Hello?" that is delivered in under 300 ms, answered from a noisy environment, or spoken with a non-standard accent can fall outside the word burst length parameters that Asterisk uses to classify a call as human. These calls get classified as machines and dropped.

International Numbers

Non-US voicemail greeting formats differ significantly in speech rate, greeting length, and beep characteristics. Asterisk AMD performs especially poorly on international campaigns without country-specific parameter sets — which require manual maintenance.

The Revenue Impact

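Here is what the accuracy gap costs at three campaign scales. The dropped-call counts assume the upper end of each false positive range from the comparison table (25% for Asterisk AMD, 3% for AI-powered AMD) applied to connected calls: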

| Campaign Scale | Daily Dials | Connect Rate | Asterisk AMD False Positives | AI AMD False Positives | Recovered Conversations/Day |
|---|---|---|---|---|---|
| Small (1 campaign) | 5,000 | 12% | 150 dropped | 18 dropped | +132 |
| Medium (3 campaigns) | 15,000 | 12% | 450 dropped | 54 dropped | +396 |
| Large (8 campaigns) | 40,000 | 12% | 1,200 dropped | 144 dropped | +1,056 |

At a conservative $40 revenue per live conversation, recovering 396 conversations per day is worth $15,840 daily, or roughly $475,000 over 30 dialing days, for a medium-sized operation.
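The figures above can be reproduced with a few lines of Python, which also makes it easy to plug in your own dial volume and connect rate. The 25% and 3% false positive rates are the values implied by the dropped-call counts in the table, and the function name is only illustrative.

```python
def recovered_conversations(daily_dials, connect_rate, fp_rules, fp_ai):
    """Live conversations per day no longer dropped after switching AMD engines."""
    live_connects = daily_dials * connect_rate
    dropped_rules = round(live_connects * fp_rules)  # dropped by rule-based AMD
    dropped_ai = round(live_connects * fp_ai)        # dropped by AI-powered AMD
    return dropped_rules - dropped_ai


REVENUE_PER_CONVERSATION = 40  # dollars, the conservative figure used above

for dials in (5_000, 15_000, 40_000):
    recovered = recovered_conversations(dials, 0.12, 0.25, 0.03)
    print(dials, recovered, recovered * REVENUE_PER_CONVERSATION)
# 5000 132 5280
# 15000 396 15840
# 40000 1056 42240
```

Multiplying the medium-campaign daily figure by 30 dialing days gives $475,200, which is where the roughly $475,000 monthly number comes from.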

Detection Speed Comparison

Latency matters differently depending on your architecture:

Asterisk AMD requires 3–5 seconds of audio analysis before returning a classification. During this window, the call is held in an analysis state. On a predictive dialer running tight pacing, this delay contributes to abandonment rate problems.

AI-powered AMD returns a classification in 200–400 ms, so agent delivery happens almost immediately on HUMAN classifications. That makes it much easier to keep the abandon rate below the 3% regulatory threshold, even under aggressive pacing.
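To see how detection latency feeds into abandonment, it helps to compute the abandon rate from per-call connect delays. The sketch below assumes a call counts as abandoned when a live person waits more than two seconds for an agent. That window is an assumption based on the common US definition, so confirm the exact rule that applies to your campaigns. The function name and sample delays are illustrative.

```python
def abandon_rate(connect_delays_s, max_delay_s=2.0):
    """Fraction of live answers that waited longer than max_delay_s for an agent."""
    if not connect_delays_s:
        return 0.0
    abandoned = sum(1 for delay in connect_delays_s if delay > max_delay_s)
    return abandoned / len(connect_delays_s)


# Illustrative day: 97 live answers connected in 0.4 s, 3 waited 3.5 s.
delays = [0.4] * 97 + [3.5] * 3
rate = abandon_rate(delays)
print(rate <= 0.03)  # True
```

With a 3–5 second analysis window, the detection step alone can exceed a two-second limit, while a 200–400 ms classification leaves most of that budget for agent delivery.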

Integration Complexity

This is where Asterisk AMD has a genuine advantage: it is already built in. There is no additional infrastructure, no API dependency, no external latency risk.

However, the integration overhead for AI-powered AMD is lower than most operators expect. For VICIdial specifically:

- Disable built-in AMD in System Settings

- Install a small AGI script that posts audio to the API

- Modify the dialplan to route based on API response

- Total implementation time: 30–60 minutes
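For a sense of what the AGI piece involves, here is a minimal Python sketch of the protocol side only. It reads the `agi_*` environment block that Asterisk sends, asks a classifier for a label, and sets a channel variable for the dialplan to branch on. The `classify_call` callable is a hypothetical stand-in for whatever posts audio to your provider's API, and reusing the `AMDSTATUS` variable name is an assumption made so that existing routing logic could stay unchanged.

```python
import io


def read_agi_env(stream):
    """Read the "agi_name: value" lines Asterisk sends before the first command."""
    env = {}
    for line in stream:
        line = line.strip()
        if not line:
            break  # a blank line ends the AGI environment block
        key, _, value = line.partition(":")
        env[key.strip()] = value.strip()
    return env


def set_variable(out, name, value):
    """Send an AGI SET VARIABLE command so the dialplan can branch on the result."""
    out.write(f'SET VARIABLE {name} "{value}"\n')
    out.flush()


def run_amd_agi(agi_in, agi_out, classify_call):
    env = read_agi_env(agi_in)
    try:
        label = classify_call(env)  # hypothetical: sends call audio to the AMD API
    except Exception:
        label = "HUMAN"  # never drop a call because the classifier failed
    set_variable(agi_out, "AMDSTATUS", label)
    agi_in.readline()  # Asterisk answers each command with a line such as "200 result=1"
    return label


# Simulated session; under Asterisk the streams would be sys.stdin and sys.stdout.
fake_in = io.StringIO("agi_channel: SIP/carrier-0001\nagi_uniqueid: 1700000000.1\n\n200 result=1\n")
fake_out = io.StringIO()
run_amd_agi(fake_in, fake_out, lambda env: "MACHINE")
print(fake_out.getvalue(), end="")  # SET VARIABLE AMDSTATUS "MACHINE"
```

Passing the streams and the classifier in as arguments keeps the script testable on a workstation before it ever touches a production dialer.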

The ongoing operational difference is significant: Asterisk AMD requires continuous parameter tuning as call patterns and carrier behavior change. AI-powered AMD requires no ongoing tuning — the model handles variation automatically.

When Asterisk AMD Is Still Acceptable

Asterisk AMD remains a reasonable choice in specific scenarios:

- Very low call volumes where the economics of improvement are small

- Air-gapped environments that cannot make external API calls

- Development and testing where accuracy is not the priority

- Simple domestic campaigns with highly predictable call patterns and modern carrier equipment

For any operation running more than 500 dials per hour on production campaigns, the accuracy difference justifies the migration.

Choosing an AI AMD Provider

Not all AI-powered AMD solutions are equivalent. Key evaluation criteria:

Accuracy on your specific traffic: Request a proof-of-concept period with your actual call data. Published accuracy numbers are averages — your traffic may be easier or harder to classify than the benchmark set.

Latency under load: Sub-200ms average latency is easy to achieve at low volume. Test at your peak call rate to confirm performance holds.

Fail-safe behavior: When the API is unreachable or times out, what does the system do? It should default to HUMAN, not MACHINE, so that an outage never drops live calls.

VICIdial/Asterisk integration support: Some providers offer documentation and support specifically for Asterisk-based deployments. Others assume a more custom integration.
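The fail-safe criterion is easy to verify in code during a proof of concept. Here is a minimal sketch of a client wrapper that falls back to HUMAN on any network error, timeout, or malformed response. The endpoint URL, the raw-audio request body, and the `label` field in the JSON response are all hypothetical. Substitute whatever your provider documents.

```python
import json
import urllib.request

API_URL = "https://amd.example.invalid/v1/classify"  # hypothetical endpoint


def classify_with_failsafe(audio_bytes, timeout_s=1.0, opener=urllib.request.urlopen):
    """Return "HUMAN" or "MACHINE", defaulting to "HUMAN" whenever the API fails."""
    request = urllib.request.Request(
        API_URL,
        data=audio_bytes,
        headers={"Content-Type": "application/octet-stream"},
    )
    try:
        with opener(request, timeout=timeout_s) as response:
            payload = json.load(response)
    except (OSError, ValueError):
        # OSError covers connection failures and timeouts; ValueError covers bad JSON.
        return "HUMAN"
    label = payload.get("label") if isinstance(payload, dict) else None
    # Anything other than a recognized label is treated as a live human.
    return label if label in ("HUMAN", "MACHINE") else "HUMAN"
```

The `opener` parameter exists so the failure paths can be exercised with a stub instead of a real outage. Keep the timeout short enough that a slow API response does not itself push calls toward abandonment.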

amdify.io is built specifically for VICIdial and Asterisk environments — it ships with a pre-built AGI script, supports the standard Asterisk AGI interface, and provides a dedicated integration guide for common VICIdial configurations.

Conclusion

The accuracy gap between Asterisk AMD and AI-powered AMD has widened significantly in 2026. iOS 26 voicemail changes, carrier audio processing, and the increasing diversity of call patterns have pushed rules-based detection further from acceptable performance thresholds.

For most outbound operations, the question is no longer whether to upgrade — it is how quickly to schedule the migration. The revenue impact from recovered live conversations is substantial, the integration is straightforward, and the ongoing maintenance burden actually decreases when you remove the need to continuously tune amd.conf parameters.

If you are currently accepting 15–25% false positive rates as the cost of doing business, you are leaving significant revenue on the table.